While a page might attract traffic from hundreds of keywords, we typically expect most of the traffic to come from the keyword with the highest position on Google. We are going to focus our attention on only one keyword per URL: the keyword with the highest ranking (of course, we could also analyze multiple combinations). We want to analyze the semantic similarity between hundreds of combinations of titles and keywords from one of the clients of our SEO management services.
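Picking a single keyword per URL can be sketched in a few lines of Python. This is only an illustration, not the code used for the analysis: the `(url, keyword, position)` row layout, the function name `top_keyword_per_url` and the sample data are all assumptions, and "highest ranking" is taken to mean the lowest position number.

```python
def top_keyword_per_url(rows):
    """Return {url: (keyword, position)}, keeping the best-ranked keyword per URL.

    `rows` is an iterable of (url, keyword, position) tuples. This layout is
    an assumption made for the example, not an export format of any tool.
    """
    best = {}
    for url, keyword, position in rows:
        # A lower position number means a higher ranking (1 is the top result).
        if url not in best or position < best[url][1]:
            best[url] = (keyword, position)
    return best


# Hypothetical sample data, invented for illustration only.
rows = [
    ("/blog/seo-titles", "title tag seo", 3.2),
    ("/blog/seo-titles", "how to write seo titles", 1.4),
    ("/blog/embeddings", "word embeddings", 5.0),
]

for url, (keyword, position) in top_keyword_per_url(rows).items():
    print(url, keyword, position)
```

Each URL ends up paired with exactly one keyword, which is the pair whose similarity with the title is then measured.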

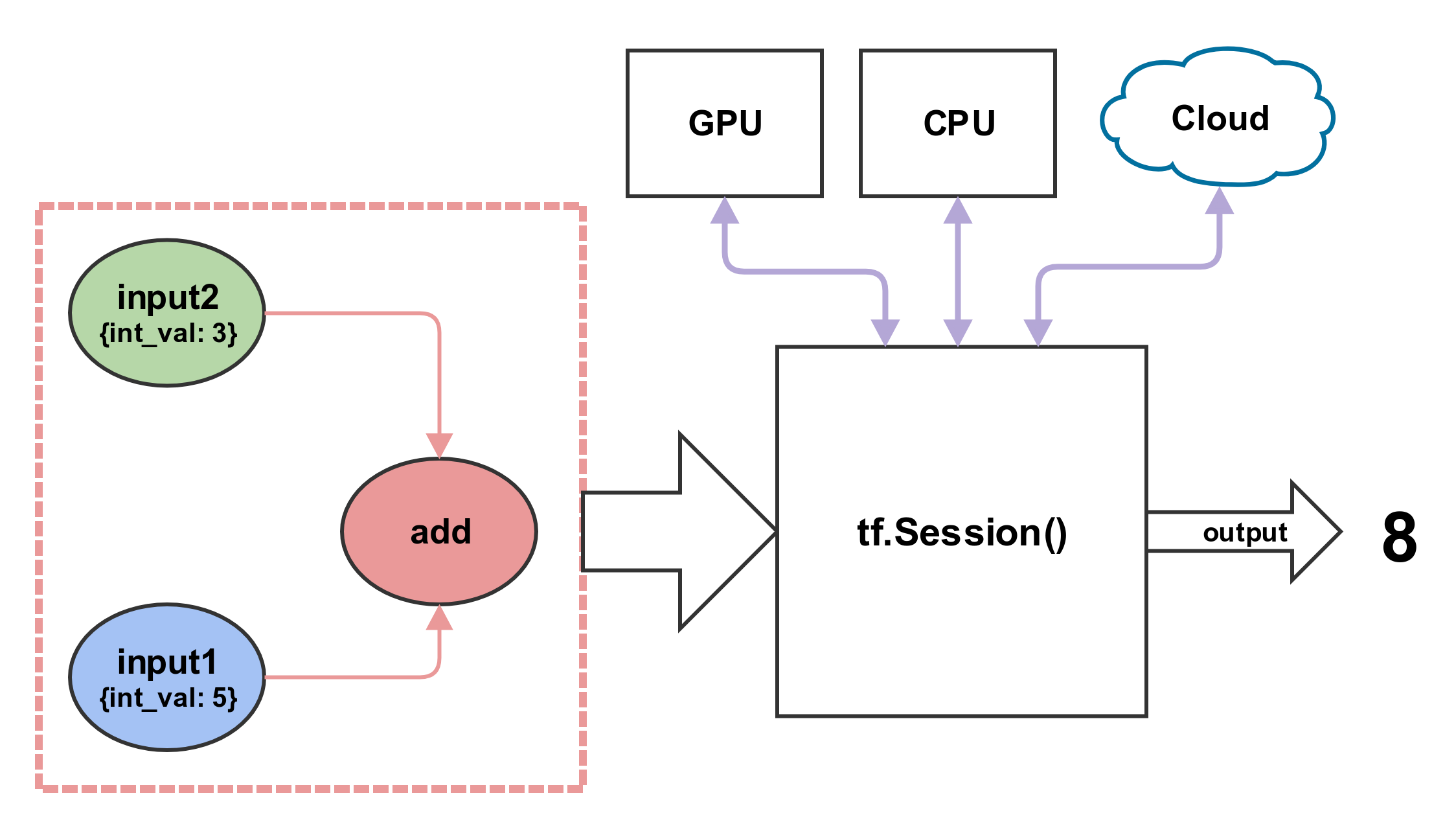

A search engine like Google uses NLP and machine learning to find the right semantic match between the intent and the content. This means that search engines no longer look at keywords as strings of text; they read the meaning that each keyword has for the searcher. As SEOs and marketers, we can now also use AI-powered tools to create the most authoritative content for a given query.

“Apple” and “apple” are the same word, and if I compare them syntactically using an algorithm like Levenshtein they look almost identical (fully identical once case is ignored). By analyzing the context of the phrase where the word apple is used, on the other hand, I can “read” the true semantic meaning and find out whether the word refers to the world-famous tech company headquartered in Cupertino or to the sweet forbidden fruit of Adam and Eve.

There are two main ways to compute semantic similarity using NLP.

The first uses semantic graphs and ontologies: we can compute the distance between two terms by looking at the distance between their nodes (this is how our tool WordLift is capable of discerning whether apple, in a given sentence, is the company founded by Steve Jobs or the sweet fruit). A very trivial but interesting example is to build a “semantic tree” (or, better, a directed graph) by using the Wikidata P279 property (subclass of).

The second uses word embeddings (or text embeddings), a type of algebraic representation of words that allows words with similar meaning to have a similar mathematical representation. A vector is an array of numbers of a particular dimension, and we calculate how close or distant two words are by measuring the distance between their vectors. In this article, we’re going to extract embeddings using the tf.Hub Universal Sentence Encoder, a pre-trained deep neural network designed to convert text into high-dimensional vectors for natural language tasks.
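Measuring how close two texts are once they have been turned into vectors can be sketched with cosine similarity, a common choice for comparing embeddings. The example below is a minimal illustration in plain Python: the three-dimensional vectors are invented toy values (a real encoder such as the Universal Sentence Encoder returns much longer vectors), and `cosine_similarity` is a helper written for this example, not part of any library.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors.

    Values near 1 mean the vectors point in the same direction (similar
    meaning); values near 0 or below mean they are unrelated.
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # Convention chosen for this sketch: a zero vector is similar to nothing.
        return 0.0
    return dot / (norm_a * norm_b)


# Toy embeddings, invented for illustration. In practice these would come
# from a sentence encoder applied to a title and to its keyword.
title_vec = [0.9, 0.1, 0.3]
keyword_vec = [0.8, 0.2, 0.4]
unrelated_vec = [-0.2, 0.9, -0.5]

print("title vs keyword:  ", cosine_similarity(title_vec, keyword_vec))
print("title vs unrelated:", cosine_similarity(title_vec, unrelated_vec))
```

The title and keyword vectors point in nearly the same direction, so their score is much higher than the score against the unrelated vector.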
Titles also matter because a searcher is more inclined to click when they find good semantic correspondence between the keyword used on Google and the title (along with the meta description) displayed in the search snippet of the SERP.

What is semantic similarity in text mining?

Semantic similarity defines the distance between terms (or documents) by analyzing their semantic meanings, as opposed to looking at their syntactic form.
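To see what comparing terms by their syntactic form looks like, here is a minimal sketch of the Levenshtein edit distance in plain Python. The `levenshtein` function is written for this example (it is the standard dynamic-programming formulation, not taken from a particular library). It counts character edits only, so it knows nothing about meaning.

```python
def levenshtein(a, b):
    """Minimum number of single-character insertions, deletions or
    substitutions needed to turn string `a` into string `b`."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string in the inner loop
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution (or match)
            ))
        previous = current
    return previous[-1]


# The two spellings differ only by the case of the first letter.
print(levenshtein("Apple", "apple"))
print(levenshtein("Apple".lower(), "apple".lower()))
```

Whether “Apple” means the company or the fruit, the edit distance is the same, which is why a purely syntactic measure cannot separate the two senses.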

In this article, we explore how to evaluate the correspondence between title tags and the keywords that people use on Google to reach the content they need. We will share the results of the analysis (and the code behind it) using a TensorFlow model for encoding sentences into embedding vectors. The result is a list of titles that can be improved on your website.

Jump directly to the code: Semantic Similarity of Keywords and Titles – a SEO task using TF-Hub Universal Encoder

Titles are one of the most important on-page factors for SEO. We read on Woorank a simple and clear definition:

“A title tag is an HTML element that defines the title of the page. They are used, combined with meta descriptions, by search engines to create the search snippet displayed in search results.”

Every search engine’s most fundamental goal is to match the intent of the searcher by analyzing the query to find the best content on the web on that specific topic. In the quest for relevancy, a good title influences search engines only partially (it takes a lot more than just matching the title with the keyword to rank on Google), but it does have an impact, especially on top ranking positions (1st and 2nd, according to a study conducted a few years ago by Cognitive SEO).
